In which the spectre of the Luddite software engineer is raised, in an AI-driven future where programming languages become commercially redundant, and therefore take on new cultural significance.

In 1812, Lord Byron dedicated his first speech in the House of Lords to the defence of the machine breakers, whose violent acts against the machines replacing their jobs prefigured large-scale trade unionism. We know these machine breakers as Luddites, a movement led by the mysterious, fictional character of General Ludd, although curiously, Byron doesn’t refer to them as such in his speech. With the topic of post-work in the air at the moment, the Luddite movements are instructive; they were made up of workers who found themselves replaced by machines, left not in a post-work Utopia, but in a state of destitution and starvation. According to Hobsbawm (1952), if Luddites broke machines, it was not through a hatred of technology, but through self-preservation. Indeed, when political economist David Ricardo (1821) raised “the machinery question”, he did so signalling a change in his own mind, from a Utopian vision where the landlord, capitalist, and labourer all benefit from mechanisation, to one where reduction in gross revenue hits the labourer alone. Against the backdrop of present-day ‘disruptive technology’, the machinery question is as relevant as ever.

A few years after his speech, Byron went on to father Ada Lovelace, the much-celebrated prototypical software engineer. Famously, Ada Lovelace collaborated with Charles Babbage on his Analytical Engine, exploring abstract notions of computation at a time when Luddites were fighting against their own replacement by machines. This gives us a helpful narrative link between mill workers of the industrial revolution and software engineers of the information revolution. That said, Byron’s wayward behaviour took him away from his family, and he deserves no credit for Ada’s upbringing. Ada was instead influenced by her mother Annabella Byron, the anti-slavery and women’s rights campaigner, who encouraged Ada into mathematics.

Today, general-purpose computing is becoming as ubiquitous as woven fabric, and is maintained and developed by a global industry of software engineers. While the textile industry developed out of worldwide practices over millennia, deeply embedded in culture, the software industry has developed over a single lifetime, with the practice of software engineering literally constructed as a military operation. Nonetheless, the similarity between mill workers and programmers is stark if we consider weaving itself as a technology. Here I am not talking about inventions of the industrial age, but the fundamental, structural crossing of warp and weft, with its extremely complex, generative properties, to which we have become largely blind since replacing human weavers with powerlooms and Jacquard devices. As Ellen Harlizius-Klück argues, weaving has been a digital art from the very beginning.

Software engineers are now threatened under strikingly similar circumstances, thanks to breakthroughs in Artificial Intelligence (AI) and “Deep Learning” methods, which take advantage of the processing power of industrial-scale server farms. Jen-Hsun Huang, chief executive of NVIDIA, which makes some of the chips used in these servers, is quoted as saying that now, “Instead of people writing software, we have data writing software”. Too often we think of Luddites as those who are against technology, but this is a profound misunderstanding. Luddites were skilled craftspeople working with technology advanced over thousands of years, who only objected once they were replaced by technology. Deep learning may well not be able to do everything that human software engineers can do, or to the same degree of quality, but this was precisely the situation in the industrial revolution. Machines could not make the same woven structures as hands, to the same quality, or even at the same speed at first, but the Jacquard mechanism replaced human drawboys anyway.

As a thought experiment then, let’s imagine a future where entire industries of computer programmers are replaced by AI. These programmers would either have to upskill to work in Deep Learning, find something else to do, or form a Luddite movement to disrupt Deep Learning algorithms. The latter case might even seem plausible when we recognise the similarities between the Luddite movement and Anonymous, both outwardly disruptive, lacking central organisation, and led by an avatar: General Ludd in the case of the Luddites, and Guy Fawkes in the case of Anonymous.

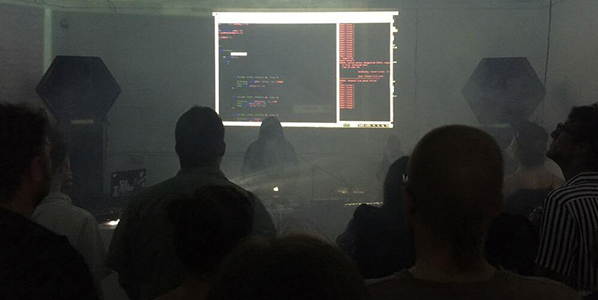

Let’s not dwell on Anonymous though. Instead, try to imagine a Utopia in which current experiments in Universal Basic Income are proved effective, and software engineers are able to find gainful activity without the threat of destitution. The question we are left with then is not what to do with all the software engineers, but what to do with all the software. With the arrival of machine weaving and knitting, many craftspeople continued hand weaving and hand knitting in their homes and in social clubs, for pleasure rather than out of necessity. This was hardly a surprise, as people have always made fabric, and indeed in many parts of the world hand weaving has remained the dominant form of fabric making. Through much of the history of general-purpose computing however, any cultural context for computer programming has been a distant second to its industrial and military contexts. There has of course been a hackerly counter-culture from the beginning of modern-day computing, but consider that the celebrated early hackers at MIT were funded by the military while Vietnam flared, and that the renowned early Cybernetic Serendipity exhibition of electronic art included presentations by General Motors and Boeing, showing no evidence of an undercurrent of political dissent. Nonetheless, I think a Utopian view of the future is possible, but only once Deep Learning renders the craft of programming languages useless for such military and corporate interests.

Looking forward, I see great possibilities. All the young people now learning how to write code for industry may find that the industry has disappeared by the time they graduate, and that their programming skills give no insight into the workings of Deep Learning networks. So, it seems that the scene is set for programming to be untethered from necessity. The activity of programming, free from a military-industrial imperative, may become dedicated almost entirely to cultural activities such as music-making and sculpture, augmenting human abilities to bring understanding to our own data, breathing computational pattern into our lives. Programming languages could slowly become closer to natural languages, simply by developing through use while embedded in culture. Perhaps the growing practice of Live Coding, where software artists have been developing computer languages for creative coding, live interaction and music-making over the past two decades, is a precursor to this. My hope is that we will begin to think of code and data in the same way as we do of knitting patterns and weaving block designs, because from my perspective, they are one and the same: all formal languages, with their structures intricately and literally woven into our everyday lives.

So in order for human cultures to fully embrace the networks and data of the information revolution, perhaps we should take lessons from the Luddites, because they were not just agents of disruption, but also agents against disruption, campaigning not against technology, but for technology as a positive cultural force.

This article was written by Alex while sound artist in residence at the Open Data Institute, London, as part of the Sound and Music embedded programme.